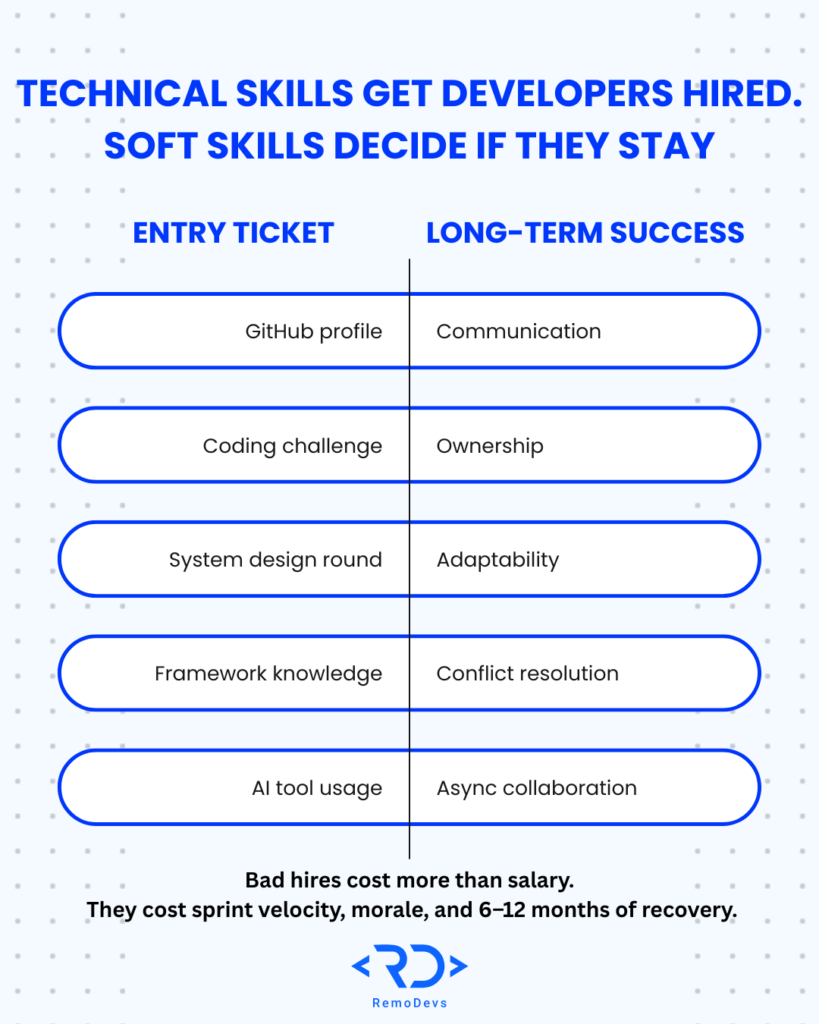

- Technical skills get a developer hired. Soft skills determine whether they last – and whether your team stays intact.

- In 2026, with AI coding assistants handling the boilerplate, human behavioral skills are the ultimate differentiator.

- Most hiring managers waste soft skills interviews on vague, easy-to-game questions. These 5 are designed to surface real behavior, not rehearsed answers.

- Each question targets a specific competency: communication, ownership, adaptability, conflict resolution, and async collaboration.

- If you’re hiring remote developers, soft skill screening is non-negotiable – and most agencies still skip it entirely.

Why Your Technical Interview Is Only Half the Equation

You’ve screened the GitHub profile. You’ve run the coding challenge. The candidate sailed through the system design round.

And then, three months in, you’re mediating a conflict between them and your product team, chasing them down for status updates, and wondering how you missed this.

You didn’t miss a technical red flag. You missed a behavioral one.

Research from Leadership IQ found that 46% of newly hired employees fail within 18 months – and in 89% of those cases, the reason was attitude, not skill. In 2026, with AI tools commoditizing baseline coding capabilities, behavioral gaps are likely to weigh even more heavily. Poor collaboration, inability to accept feedback, and lack of accountability top the list of failure points.

For engineering teams specifically, the cost of a bad hire isn’t just the salary. It’s the lost sprint velocity, the team morale hit, and the 6–12 months it takes to course-correct.

This article gives you five battle-tested interview questions designed to screen for the soft skills that actually predict long-term developer performance in today’s highly automated, distributed environments – with the follow-up probes that separate honest answers from polished ones.

What “Soft Skills” Actually Means for Engineering Roles Today

Before we get into the questions, let’s be precise. “Soft skills” is a catch-all that often gets dismissed as fuzzy or unmeasurable. For engineering roles, it isn’t.

The competencies that matter most in technical hires are:

- Communication – Can they explain a complex technical decision (or why an AI-generated solution isn’t viable) to a non-technical stakeholder without condescension or confusion?

- Ownership – Do they treat deadlines and deliverables as their responsibility or someone else’s problem?

- Adaptability – How do they respond when requirements shift mid-sprint or a new framework suddenly disrupts their workflow?

- Conflict resolution – Can they push back professionally and accept being wrong?

- Async collaboration – For mature remote roles: can they operate with minimal supervision, leverage modern async tools effectively, and document their work proactively?

Each question below is mapped to one of these competencies.

5 Soft Skills Interview Questions That Actually Work

Question 1: “Tell me about a time you had to explain a technical decision to someone non-technical. How did you approach it?”

Competency: Communication

This is the most predictive question for developer–stakeholder friction. You’re not asking whether they can communicate – everyone says they can. You’re asking for a specific instance that reveals how they actually do it, especially when stakeholders assume technology (and AI) can “just do anything.”

What a strong answer looks like:

- They describe the audience first, then adjust their language accordingly.

- They use analogies, not jargon.

- They mention iteration: “I checked for understanding and adjusted.”

Red flags:

- Vague, non-specific answers (“I just explained it simply”).

- A tone of frustration toward the non-technical person.

- No mention of feedback or confirmation from the other party.

Follow-up probe: “What did the other person misunderstand first, and how did you handle that?”

Question 2: “Tell me about a project that failed or missed a deadline. What was your role in that?”

Competency: Ownership and Accountability

This question has one job: to see whether the candidate takes ownership or distributes blame. Most candidates expect it, but the follow-up is where the real signal comes from.

What a strong answer looks like:

- They claim a specific part of the failure as their own.

- They describe what they changed afterward – not what the team changed.

- They use “I” at least as often as “we” when describing the problem.

Red flags:

- An answer where every contributing factor is external (unclear requirements, the PM, the client).

- No behavioral change described post-failure.

- The story keeps getting reframed toward what others could have done better.

Follow-up probe: “If you could go back and change one decision you personally made, what would it be?”

Question 3: “Describe a time when your priorities shifted suddenly mid-project. How did you respond?”

Competency: Adaptability

Engineering environments change rapidly. Startups pivot. Enterprise clients reframe scope. A developer who locks up under ambiguity is a liability regardless of how clean their code is.

What a strong answer looks like:

- They describe the process of reprioritizing, not just the outcome.

- They mention proactive communication with stakeholders or the team.

- There’s evidence of structured thinking under pressure (e.g., “I mapped out what could be deferred without risk”).

Red flags:

- Heavy emphasis on how disruptive the change was with no adaptive action.

- They waited to be told what to do rather than proposing a path forward.

- No communication with the broader team during the chaos.

Follow-up probe: “What information did you have to gather quickly, and who did you go to first?”

Question 4: “Tell me about a time you disagreed with a technical decision made by a teammate or manager. What did you do?”

Competency: Conflict Resolution and Professional Pushback

You want engineers who advocate for good solutions – not ones who either capitulate silently or bulldoze the room. This question surfaces how they handle professional disagreement.

What a strong answer looks like:

- They raised the disagreement directly with the person involved, not around them.

- They focused on the decision, not the person.

- They describe accepting the final call if they were outvoted – without passive resistance.

Red flags:

- They “just went along with it” without raising the concern at all.

- They escalated immediately without trying peer-level resolution first.

- The story ends with them being proven right and the other person being wrong – every time.

Follow-up probe: “How did that person respond when you brought it up, and how did you leave things?”

Question 5: “Walk me through how you keep your teammates informed when you’re working remotely on a complex task.”

Competency: Async Collaboration

For distributed teams – especially when hiring developers across time zones – this is the question that separates functional remote workers from people who disappear into their work and resurface with deliverables (or excuses) three days later.

What a strong answer looks like:

- They have a system, not just good intentions (e.g., “I update the ticket, ensure our AI summarizer captures my daily async notes, and flag blockers immediately”).

- They mention proactive over-communication as a deliberate habit.

- They show awareness of how their silence affects team coordination.

Red flags:

- “I just message people when something comes up.”

- No mention of documentation or structured updates.

- Reliance on synchronous check-ins as their primary communication loop.

Follow-up probe: “Tell me about a time when your remote communication broke down. What happened and what did you put in place after?”

A Quick-Reference Scoring Framework

Use this rubric during debriefs to standardize evaluation across interviewers:

| Competency | Green Flag | Yellow Flag | Red Flag |
| --- | --- | --- | --- |
| Communication | Audience-first, iterative, checks for understanding | Clear but one-directional | Jargon-heavy, dismissive of audience |
| Ownership | Claims specific failures, shows behavioral change | Partial ownership, vague improvement | Blame-first, no self-reflection |
| Adaptability | Structured pivot, proactive comms | Waits for direction, eventually adjusts | Locks up, focuses on disruption |
| Conflict Resolution | Direct, respectful, accepts final decisions | Raises issues but indirectly | Silent resentment or immediate escalation |
| Async Collaboration | Documented system, proactive updates | Ad hoc but functional | Reactive only, no documentation habit |

Note: Score each dimension from 1 to 3, where 1 corresponds to the Red Flag column and 3 to the Green Flag column. Any candidate scoring 1 in two or more categories should not move forward, regardless of technical performance.
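If your team tracks debrief scores in a spreadsheet or script, the cut-off rule can be applied mechanically. Here is a minimal Python sketch of that rule. It assumes that a score of 1 means red flag, 2 yellow, and 3 green. The competency keys and the function names `count_red_flags` and `should_advance` are illustrative, not part of any existing tool.

```python
# Hypothetical helper for the debrief rubric.
# Assumption: 1 = red flag, 2 = yellow flag, 3 = green flag.

COMPETENCIES = (
    "communication",
    "ownership",
    "adaptability",
    "conflict_resolution",
    "async_collaboration",
)

RED_FLAG = 1
VALID_SCORES = (1, 2, 3)
MAX_RED_FLAGS = 1  # two or more red flags means the candidate does not advance


def count_red_flags(scores: dict[str, int]) -> int:
    """Return how many competencies were scored as a red flag."""
    missing = set(COMPETENCIES) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    for name in COMPETENCIES:
        if scores[name] not in VALID_SCORES:
            raise ValueError(f"{name} must be scored 1, 2, or 3")
    return sum(1 for name in COMPETENCIES if scores[name] == RED_FLAG)


def should_advance(scores: dict[str, int]) -> bool:
    """Apply the cut-off: fewer than two red flags lets the candidate proceed."""
    return count_red_flags(scores) <= MAX_RED_FLAGS


strong = {
    "communication": 3,
    "ownership": 3,
    "adaptability": 2,
    "conflict_resolution": 3,
    "async_collaboration": 3,
}
risky = dict(strong, ownership=1, async_collaboration=1)

print(should_advance(strong))  # True
print(should_advance(risky))   # False
```

The check deliberately ignores technical scores, mirroring the rule that two red flags disqualify a candidate regardless of technical performance.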

The Biggest Mistake in Soft Skills Interviews

Most hiring managers ask these questions once and accept the first answer.

That’s not an interview. That’s a conversation.

The follow-up probe is where the behavioral signal emerges. A polished candidate can give a credible surface-level answer to every question above. It’s the second and third layer – the specifics of who, when, what changed – where you find out whether the story is real or rehearsed.

Also: cross-reference answers across the interview. If a candidate describes themselves as highly collaborative in Question 5 but their answer to Question 4 shows a pattern of silent disagreement, you’ve found an inconsistency worth probing.

Soft skills screening is a skill in itself. It requires preparation, consistency across interviewers, and a calibrated rubric – which most engineering teams don’t have the bandwidth to build from scratch.

How RemoDevs Eliminates This Problem Before the Interview Ever Starts

Here’s an honest observation: the five questions above are only useful if you’re seeing the right candidates in the first place.

If you’re reviewing 40 profiles to find 3 worth interviewing, and your screening process is a resume filter plus a quick call, you’re not screening – you’re gambling.

At RemoDevs, soft skill assessment isn’t a final step. It’s baked into every stage of our process.

We filter out 90% of candidates before they reach your desk. That’s not a marketing claim – it’s our operating standard. Our process includes:

- A structured behavioral interview conducted by our senior recruiters, using competency-mapped questions aligned to your team’s specific working style.

- Communication assessments that evaluate written async clarity – a non-negotiable for distributed teams in 2026.

- Culture-fit profiling based on your engineering culture, not a generic rubric.

- Reference-backed validation of the behavioral signals we surface.

The result: when you see a RemoDevs shortlist, you’re looking at the top 10% of vetted candidates – developers who’ve already passed the same quality bar this article describes.

You’re not running the gauntlet. You’re choosing between finalists.

That’s not outsourcing. That’s precision hiring.

Stop Screening. Start Choosing.

Your time as a CTO, VP of Engineering, or Founder is worth more than 40 resume reviews and 12 inconclusive first-round calls.

If you’re scaling your engineering team – whether that’s one critical hire or an entire squad – let’s talk for 15 minutes.

We’ll show you an active shortlist of pre-vetted developers, matched to your stack, your time zone, and your working culture.

No pitch deck. No discovery questionnaire with 30 fields. Just a direct conversation about your requirements and our current talent pool.

👉 Book your 15-minute discovery call with RemoDevs – and see the top 10% before your competition does.
